
Fifty years since a hand-soldered circuit board sold for $666.66, Apple has gone from a Los Altos garage to the most valuable company on earth. Its story is less about the products it built than about how each one redrew the boundaries of what technology could be. The Apple II, Macintosh, iPhone, and seven others didn't just open new markets. They made the old ones obsolete.

Apple turned 50 this year. Half a century since Steve Jobs and Steve Wozniak sold a Volkswagen bus and a programmable calculator to fund a company in a garage in Los Altos, California. Fifty years since a hand-soldered circuit board called the Apple I went on sale for $666.66 at the Byte Shop in Mountain View.

In that time, Apple has gone from two guys in a garage to the most valuable company on the planet. It has nearly died: 90 days from bankruptcy, by some estimates, in 1997. It has lost its founder, fired its founder, brought him back, lost him again. It has made products that flopped spectacularly (the Newton, the G4 Cube, the butterfly keyboard) and products that reshaped civilisation.

But here's the thing about Apple's legacy. It's not really about what the company built. It's about what it destroyed. Every defining Apple product didn't just create a new market; it rendered something else obsolete. A design convention. A product category. An entire industry. Sometimes several at once.

This is a list of the ten Apple products that did the most damage and built the most future. Not the ten best, or the ten most popular, or the ten most profitable. The ten most consequential. The ones that changed how the rest of the tech industry thought about what was possible, necessary, or acceptable.

The ones that drew a line between before and after.

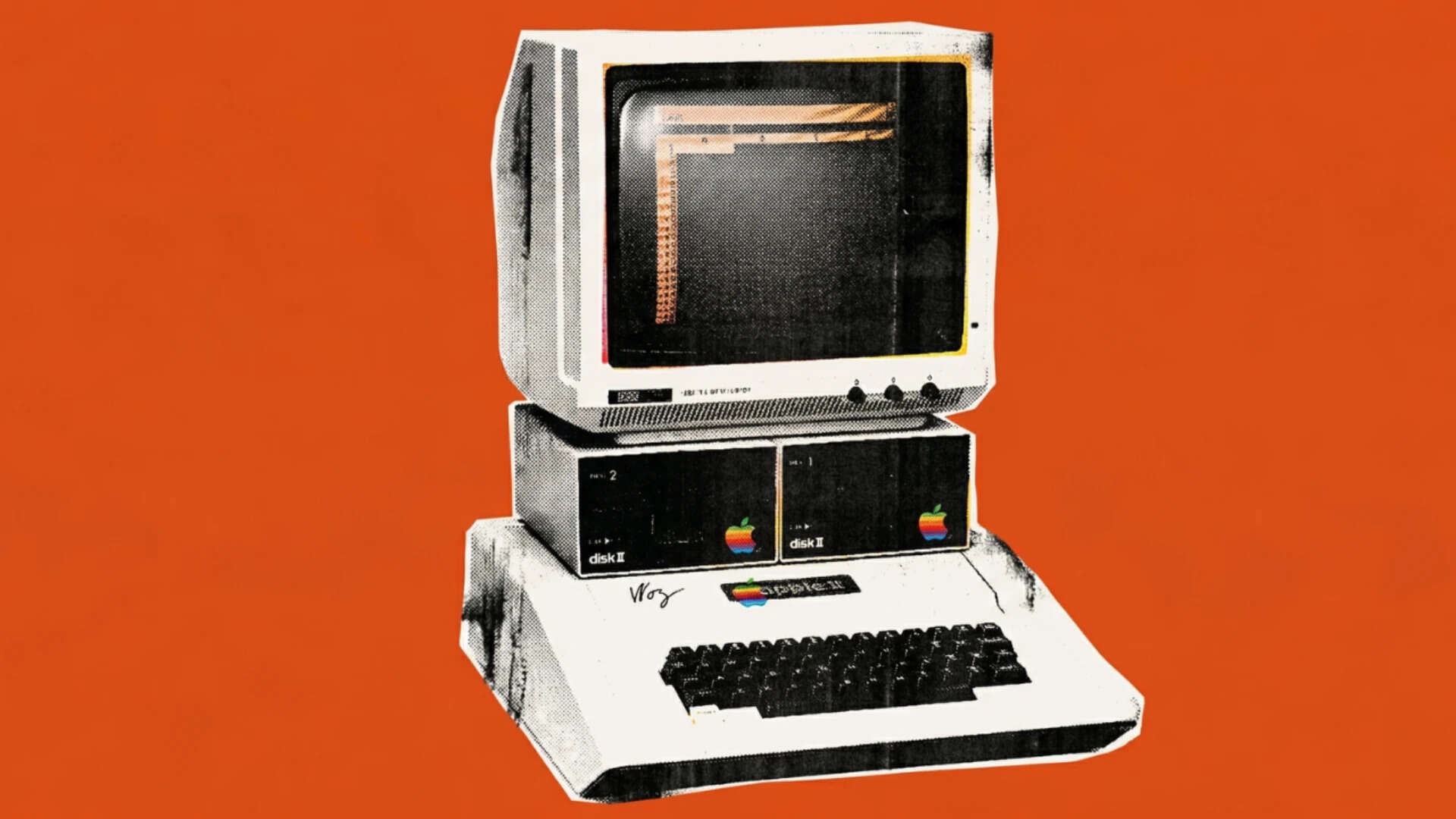

Apple II: Before this, computers didn't belong to regular people

Before the Apple II, computers lived in offices and universities. They hummed behind closed doors. Regular people didn't touch them, didn't think about them, didn't want them. The idea of a computer in someone's kitchen was absurd, like suggesting every home needed a printing press.

Steve Wozniak didn't see it that way. He wrote in Byte magazine in 1977 that a personal computer should be "small, reliable, convenient to use, and inexpensive." Simple enough. Nobody else was building one that ticked all four boxes.

The Apple II debuted at the West Coast Computer Faire in April 1977 and went on sale that June for $1,298. It came in a plastic case, designed by Jerry Manock, with a built-in keyboard. Plug it in, connect it to your TV, and go. No soldering. No wiring diagrams. No engineering degree required. It could display colour graphics, which was radical enough that Apple redesigned its logo into a rainbow stripe just to show it off.

But here's what really mattered: it had eight expansion slots. Wozniak's open architecture meant anyone could build accessories for it. Printers, modems, memory boards, hard drives: the Apple II became a platform, not just a product. And when VisiCalc, the first spreadsheet program, launched exclusively on the Apple II in 1979, the machine suddenly had a reason to exist in every small business in America. Wozniak later said small businesses bought 90% of Apple IIs, not the hobbyists he and Jobs had expected. Sales went from $775,000 in 1977 to $118 million by 1980.

The Apple II didn't just sell well. It buried the idea that computers were specialist equipment for specialists. After 1977, they were appliances. Consumer goods. Things normal people bought for normal reasons. The hobbyist era was over before most people even knew it had started.

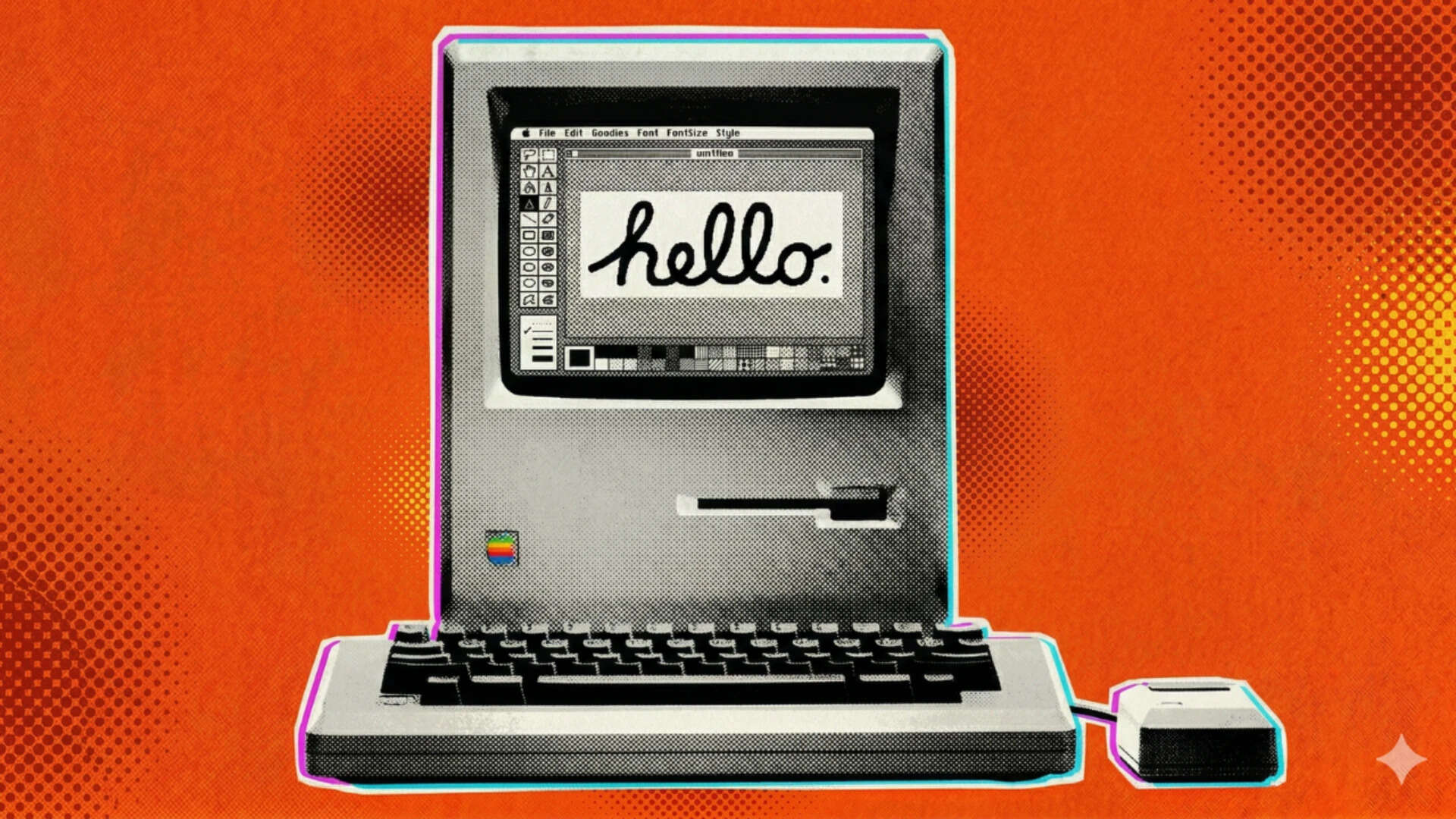

Macintosh: Every desktop you've ever used started here

Before January 24, 1984, using a computer meant typing. Every command, every file, every function—entered as text, executed on faith, interpreted by nobody but the machine.

The graphical user interface existed in research labs. Xerox had invented it at PARC. Nobody had made it a product regular people could actually use.

The original Macintosh changed that in 60 seconds. The Super Bowl ad, directed by Ridley Scott and aired nationally just once, told you all you needed to know: there was a before, and there was now. The Mac itself did the rest. A 9-inch screen. A mouse. A desktop with folders and windows and a trash can. You pointed at what you wanted. You clicked. The computer responded. No manual required.

It wasn't the first machine to use a GUI: Apple's own Lisa had launched a year earlier at $9,995, and promptly died. But the Mac cost $2,495, fit on a desk, and came with MacWrite and MacPaint baked in. For the first time, a computer could show you what your document would look like before you printed it. WYSIWYG (what you see is what you get) wasn't just a feature. It was a philosophical shift in who computers were for.

The Mac nearly failed anyway. Sales stalled after the initial frenzy. Jobs was pushed out of the division. But the design paradigm survived his departure. When Microsoft shipped Windows in 1985, and more successfully in 1990, it was building on the vocabulary the Mac had established: icons, menus, the desktop metaphor. Every mainstream operating system in use today, from Windows to Android, descends from what a small team in Cupertino built in 1984.

The command line didn't disappear—engineers still live in it—but it stopped being the only way in. Apple had turned computing into something you could see.

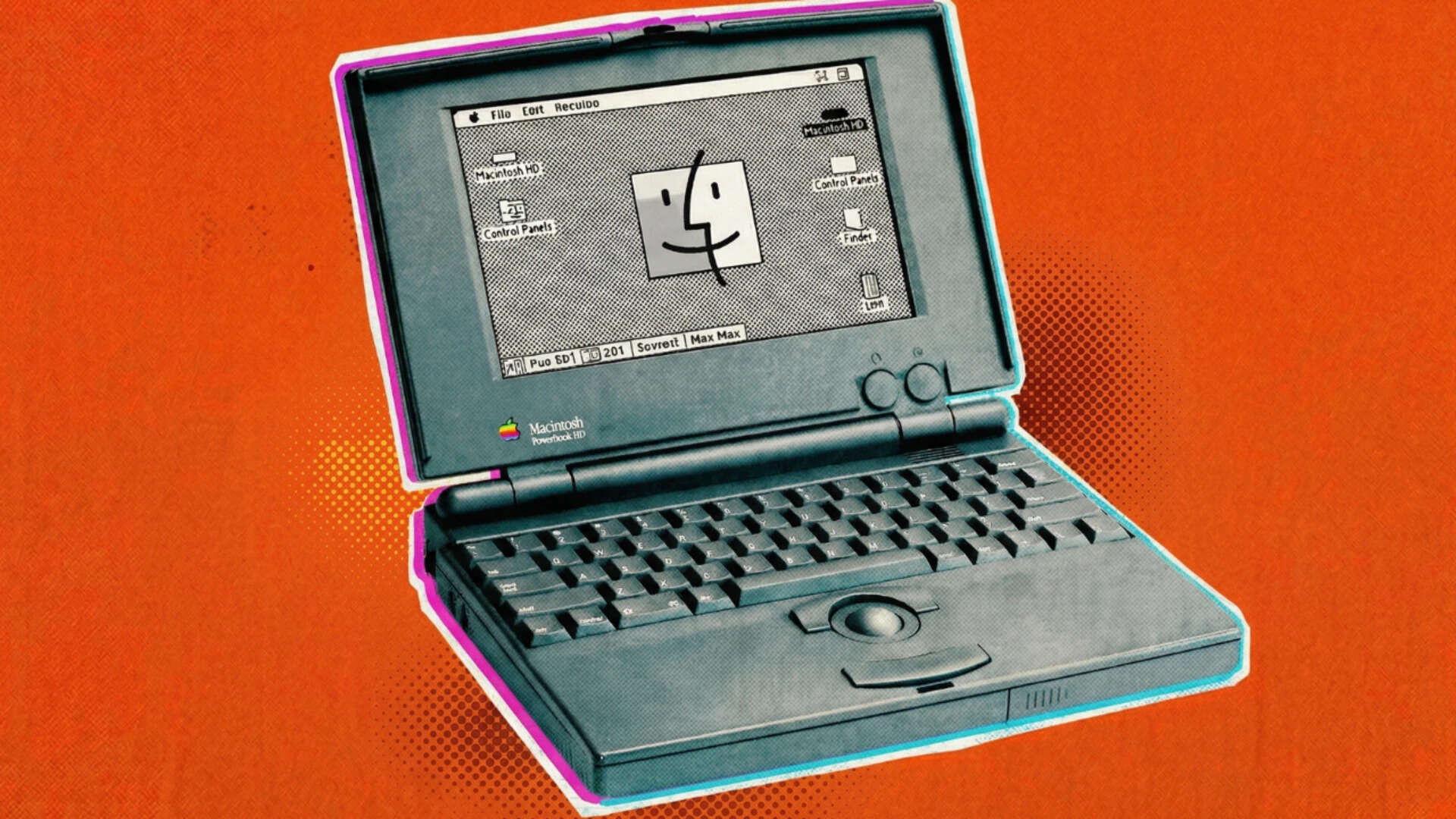

PowerBook: Why your laptop looks the way it does

Go look at any laptop made today. Keyboard pushed to the back. Trackpad in front. Palm rests on either side. That layout didn't exist before October 1991.

Before the PowerBook, portable computers were a mess. The keyboard sat at the front edge, your wrists hung off the table, and the pointing device, if there was one, clipped awkwardly to the side. Apple's own Macintosh Portable, launched in 1989, weighed 7.2 kg. It was portable the way a bowling ball is portable.

The PowerBook changed everything. Apple's industrial designer Robert Brunner pushed the keyboard to the rear and planted a trackball in the open space below it. It sounds obvious now. It wasn't obvious then. Every DOS laptop had the keyboard forward, with empty space behind it often used for function-key reference cards. Brunner said he designed the PowerBook "so it would be as easy to use and carry as a regular book."

Here's a detail most people don't know: Apple didn't even build the PowerBook 100 in-house. The company sent its schematics to Sony, which miniaturised and manufactured the thing in under 13 months. Sony's president, Norio Ohga, gave project manager Kihey Yamamoto permission to pull engineers from any division in the company. They cancelled other projects to get it done.

The PowerBook line generated over $1 billion in its first year. Apple leapfrogged Toshiba and Compaq to become the market leader in portable computing. More importantly, by mid-decade, every laptop maker on the planet had copied the layout. The keyboard moved back. The trackpad moved forward. The palm rests appeared. Nobody asked permission. Nobody credited Apple. They just quietly adopted the template, and that template hasn't changed in 35 years.

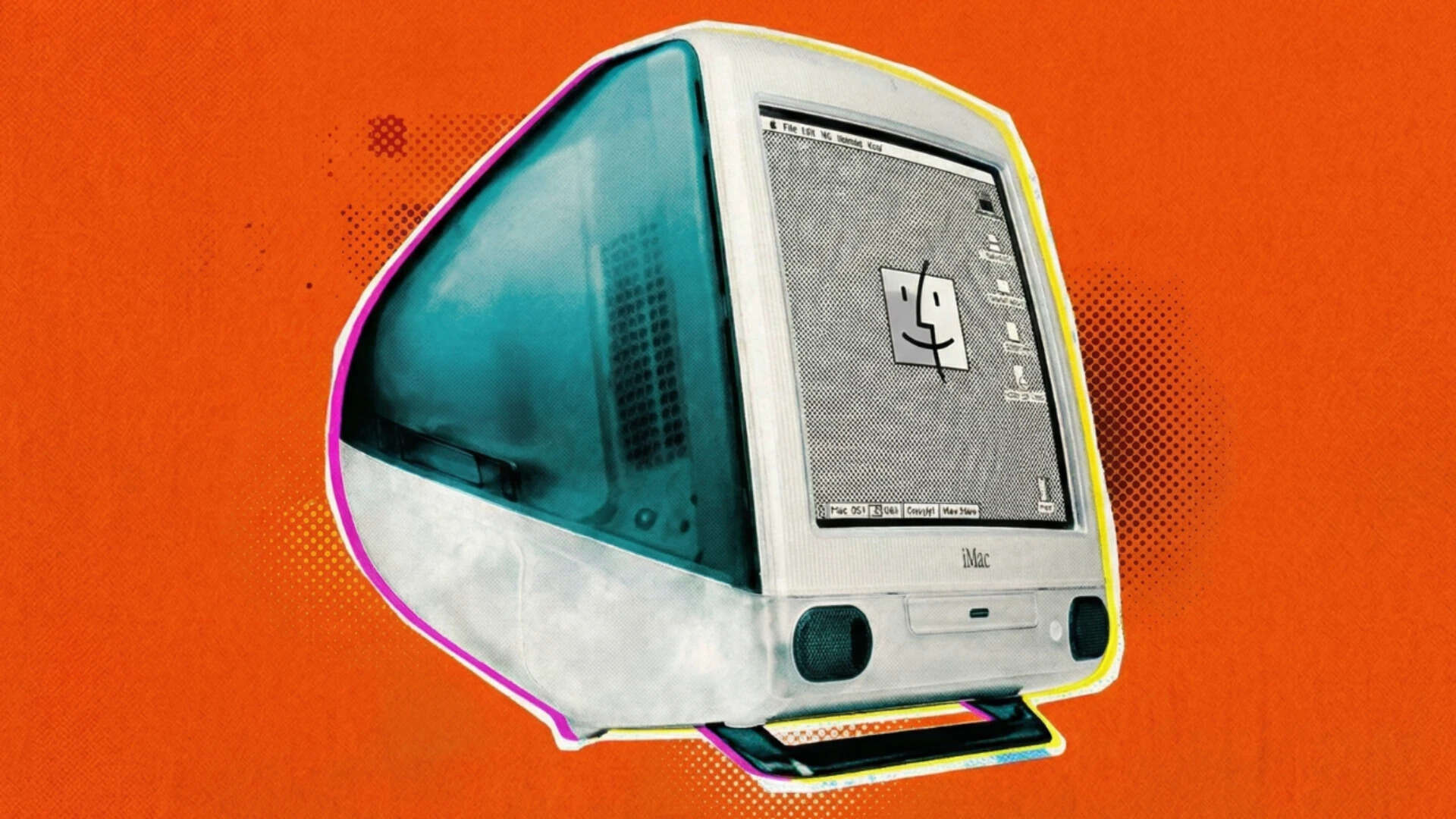

iMac: Bondi Blue and the end of the beige box era

By 1997, Apple was bleeding. The company lost $878 million that year. Experts weren't debating whether Apple would die; they were debating when. Steve Jobs had just returned after being fired more than a decade earlier, and he needed a hit. Fast.

What he got was the iMac. Unveiled on May 6, 1998, it looked like nothing the industry had ever produced. A bulbous, translucent, Bondi Blue shell, named after the water at Sydney's Bondi Beach, housing a 15-inch CRT, a G3 processor, and a built-in modem. No floppy drive. No SCSI ports. No ADB. Just two USB ports, at a time when USB was a languishing standard nobody bothered with.

Jony Ive's design team had tested solid plastics but thought they looked cheap. Translucent was the answer: cheeky, playful, nothing like the grey rectangles filling every office and home. The designers brought random translucent objects to the studio for inspiration. One was a piece of greenish-blue beach glass. That became the colour.

Before the iMac, every computer on the market looked like every other computer on the market. Beige boxes with beige monitors and beige keyboards. Functional. Forgettable. The iMac ended that overnight. It sold 278,000 units in its first six weeks. 800,000 in its first 20 weeks. Nearly half the buyers were first-time computer owners. Apple went from an $878 million loss in 1997 to a $414 million profit in 1998.

The ripple effects went further than sales. USB became a universal standard because Apple forced the industry's hand. The floppy disk began its slow death. And the "i" prefix, originally standing for "internet", became the most valuable letter in tech branding, spawning the iPod, iPhone, iPad, and about ten thousand startup names that didn't survive.

iPod: A thousand songs, a scroll wheel, and the beginning of everything

By 2001, Apple was stable but still a computer company. The iPod changed that sentence permanently.

Launched on October 23, 2001, the first iPod held 1,000 songs on a 5GB hard drive and connected to your Mac via FireWire. It cost $399, it had a mechanical scroll wheel, and Steve Jobs sold it with five words: "1,000 songs in your pocket."

The iPod wasn't the first MP3 player. Creative, Diamond, and others had been selling them for years. But those devices were clunky, held a few dozen songs, and had interfaces that felt like navigating a tax form. The iPod's scroll wheel made browsing music feel physical, intuitive in a way no one had achieved before.

What made the iPod truly consequential wasn't the hardware. It was iTunes. Apple built a legal digital music store when the industry was drowning in piracy, and suddenly there was a legitimate, frictionless way to buy a song for 99 cents. The iTunes Store became the world's largest music retailer. The CD, a format that had dominated music for two decades, started its decline the moment buying a single track became easier than driving to a record store.

The white earbuds became a status symbol for an entire generation. The Discman became a relic. And more quietly, the iPod proved something critical inside Apple: that the company could dominate a market it had never competed in before. That confidence, and the ecosystem thinking behind iTunes, became the blueprint for everything that followed.

iPhone: The device that turned a phone into everything

On January 9, 2007, Steve Jobs walked onto a stage in San Francisco and said Apple was introducing three products: a widescreen iPod with touch controls, a revolutionary mobile phone, and a breakthrough internet communicator.

Then he repeated it. Three products. iPod. Phone. Internet device. The audience caught on. It was one device.

The iPhone wasn't the first smartphone. BlackBerry owned the business market. Nokia dominated globally. Windows Mobile existed. But every one of those devices was built around a physical keyboard or a stylus-driven interface designed by engineers for engineers. The iPhone had no keyboard. No stylus. Just a 3.5-inch capacitive touchscreen and a single home button.

Jobs was explicit about why: "Who wants a stylus? You have to get them, put them away, you lose them. Yuck." He was right. Within a year, every phone manufacturer was scrambling to build a touchscreen rival. Within five, the flip phone was a museum piece. BlackBerry, which had seemed invincible, entered a death spiral it never recovered from. Nokia, which had shipped more phones than anyone on earth, sold its handset business to Microsoft in a fire sale.

The App Store, which launched in 2008, completed the transformation. It turned the iPhone from a phone into a platform: a pocket computer that could be anything. A camera. A GPS. A game console. A wallet. A medical device. The point-and-shoot camera, the standalone GPS unit, the MP3 player, the PDA, the alarm clock, the pocket calculator: the iPhone absorbed them all. No single product in history has made more categories obsolete.

MacBook Air: The envelope that ended an era

Steve Jobs pulled it out of a manila envelope.

That was the entire pitch at Macworld 2008. The MacBook Air, tapered to a barely-there 0.4 cm at its thinnest point and weighing 1.36 kg, slid out of an interoffice envelope like a sheet of paper. The audience gasped. The message was clear: this is what a laptop looks like now.

The original Air wasn't a powerhouse. It had one USB port, no optical drive, a relatively slow processor, and got lukewarm reviews for its performance. None of that mattered. The Air redefined the conversation. Before it, laptop makers competed on specs: faster processor, more RAM, bigger hard drive. After the Air, they competed on thinness and weight. Intel created an entire product category, the Ultrabook, specifically in response.

Apple had already killed the floppy disk with the iMac a decade earlier. The Air went after the next target: the optical drive. If the thinnest laptop in the world didn't need a disc drive, why should any laptop? CDs and DVDs, already losing ground to digital downloads, never recovered. Within a few years, most laptop makers had followed Apple's lead and dropped the drive entirely.

The design language of the MacBook Air (aluminium unibody, tapered profile, minimalist I/O) became the template for an entire decade of laptop design across every brand. The chunky, port-laden laptop didn't disappear immediately. But it started looking embarrassing.

iPad: Nobody needed a tablet until everyone did

The tablet computer had been tried before. Microsoft pushed Tablet PCs through the early 2000s. They flopped. Stylus-driven, running full Windows on underpowered hardware, they were neither good laptops nor good tablets. Steve Ballmer famously waved one around at CES. Nobody cared.

Then, on January 27, 2010, Steve Jobs sat on a couch onstage and held up a thin slab of glass. The iPad. A 9.7-inch touchscreen running a scaled-up version of iPhone OS, starting at $499. Critics called it a giant iPod Touch. They weren't wrong, and it didn't matter.

The iPad created a category that hadn't existed in any meaningful way. It wasn't trying to replace your laptop. It was the thing you reached for on the couch, in bed, at the breakfast table. Reading, browsing, watching, casual gaming: the iPad owned the space between the phone and the computer. Nobody had successfully occupied that gap before.

The collateral damage was swift. The netbook, an entire category of cheap, small, underpowered laptops that had been booming just months earlier, collapsed within a single product cycle. Asus, Acer, and Samsung had bet heavily on netbooks as the next big thing in personal computing. The iPad made them all look like half-measures overnight. The e-reader market, dominated by Amazon's Kindle, also felt the impact: the iPad could do everything a Kindle did and about a thousand things it couldn't.

AirPods: The headphone jack died. These were the eulogy.

When Apple removed the headphone jack from the iPhone 7 in September 2016, the internet lost its mind. Dongle jokes. Petitions. "Courage" became a meme after Phil Schiller used the word to describe Apple's decision. Three months later, Apple released AirPods ($159 wireless earbuds in a dental floss-sized case) and the outrage slowly turned into adoption.

AirPods weren't the first wireless earbuds. Bluetooth headsets had existed for years. But they were fiddly, unreliable, and looked like medical devices. AirPods worked differently. Pop open the case near your iPhone and a pairing card appeared on screen. Put them in your ears and music played. Take one out and it paused. No setup menus. No Bluetooth scanning. No manual. The W1 chip inside handled everything.

The real accomplishment was making wireless audio feel inevitable rather than aspirational. Within two years, AirPods had become the best-selling headphone of any kind in the world. The white stems became as recognisable as the white iPod earbuds had been a decade earlier. The wired earbud, the kind that tangled in your pocket, snagged on door handles, and frayed at the connector, suddenly felt like a relic from a previous era.

Apple had removed the headphone jack first and then made the case for why it was right. Backwards, aggressive, and ultimately effective.

Apple then built an entire product line on top of AirPods: AirPods Pro with active noise cancellation, AirPods Max over-ears, spatial audio baked into Apple Music. What started as a pair of earbuds became the foundation of Apple's wearable audio ecosystem.

Apple Silicon: When Apple decided it didn't need Intel anymore

For 15 years, every Mac ran on Intel processors. Apple was a customer. A very large, very important customer, but still a customer. If Intel's chips ran hot, Apple's laptops ran hot. If Intel fell behind on its manufacturing process, Apple's product roadmap stalled. By the late 2010s, Intel was falling behind badly, and Apple's laptops were paying the price. The MacBook Pro had become a machine that throttled its own processor to manage heat.

Apple had been quietly building the solution since 2008. That's when Johny Srouji, a former Intel and IBM engineer, joined to lead development of the A4, Apple's first custom system-on-a-chip for the iPhone. Over the next decade, his team grew into thousands of engineers across San Diego, Munich, and Israel, steadily making the A-series chips faster, more efficient, and more capable. By 2020, the iPhone's processor was already outperforming most Intel laptop chips in single-core benchmarks. The question had shifted from "can Apple build a Mac chip?" to "why hasn't it already?"

The M1, unveiled on November 10, 2020, answered that question emphatically. Built on TSMC's 5nm process with 16 billion transistors, it combined the CPU, GPU, and Neural Engine on a single chip with unified memory. The result was absurd: a fanless MacBook Air that outran the Intel MacBook Pro while lasting nearly twice as long on battery. The old trade-off (power or efficiency, pick one) simply ceased to exist.

The transition was risky. Apple had botched a chip migration before, struggling through painful compatibility issues when it moved to Intel from PowerPC in 2006. Srouji's team even had to redesign their validation process on the fly when COVID-19 hit, using remote cameras instead of engineers huddled over microscopes to inspect early silicon. By June 2023, the entire Mac lineup had completed the switch to Apple Silicon. Intel, which had once supplied every Mac processor, was out.

The M1 didn't just change Apple's laptops. It changed the industry's assumptions about who gets to make processors. Apple proved that a product company, not a chip company, could design silicon that embarrassed the incumbents. The performance-versus-battery-life trade-off that had defined laptop computing for decades was exposed as a limitation of lazy architecture, not a law of physics.

Five decades. Ten products. Each one made something new and made something else ancient. That's the pattern Apple has repeated since 1977: not just building the future, but making the present feel suddenly, irreversibly, past.
