
AI vision tools can misidentify objects like Sphynx cats as elephants due to their reliance on surface patterns rather than human-like contextual understanding. This "representational misalignment" poses risks in critical applications. Researchers are exploring solutions by training AI on human similarity judgments to foster relational understanding.

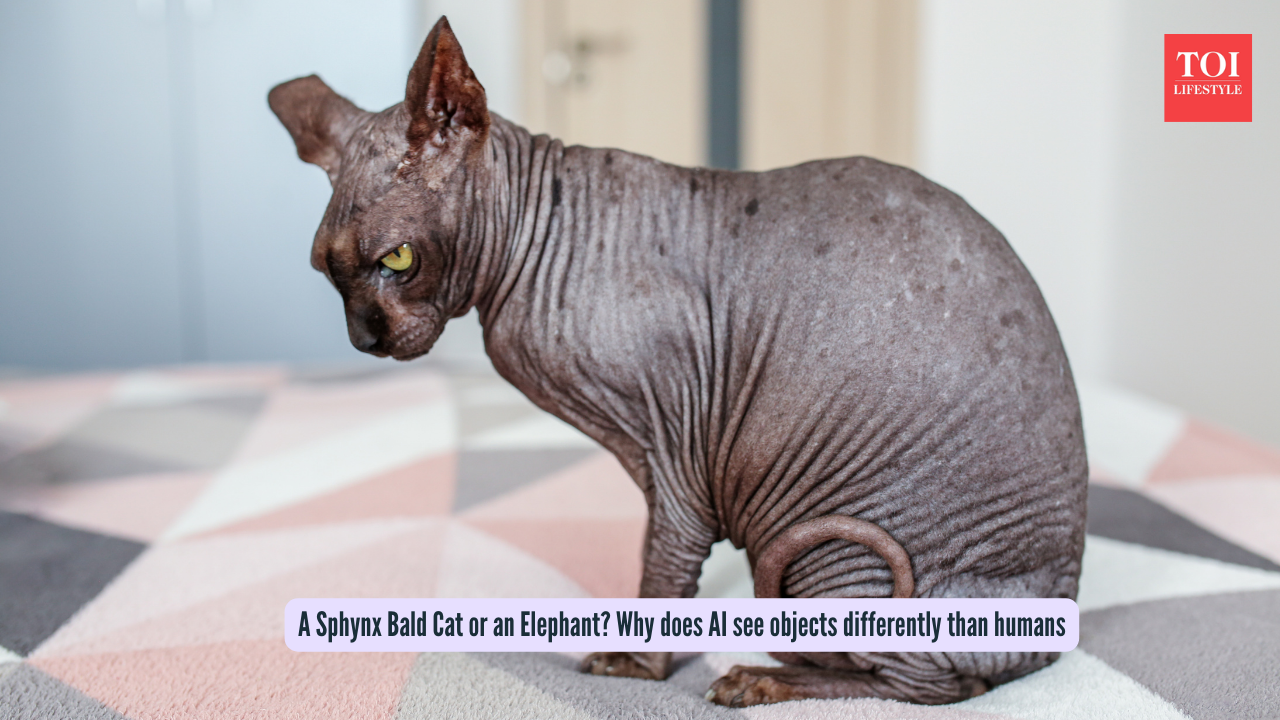

Ever seen a photo of a bald Sphynx cat and thought, "Cute kitty!"? The brain makes that connection instantly. But give the same photo to some AI vision tools, and they might call it an elephant. Weird, right? It's not a glitch; it's a window into how machines "see" the world differently from us humans. We're wired to spot shapes, context, and meaning in a flash, but AI? Not so much. It often depends on surface cues like textures or pixel patterns, missing the big picture. This mismatch isn't just thought-provoking; it could also mean trouble in settings like self-driving cars misreading road signs or doctors relying on AI to scan X-rays.

A bald Sphynx cat or an elephant? Why does AI see objects differently than humans?

How does AI perceive things differently from humans?

According to a study published in Nature, "The kinds of mistakes an AI makes reveal how it organizes visual information." A shape-focused AI might mix up the cat with a tiger, but when the output is an elephant, the model is likely leaning on surface texture rather than shape, and that could be problematic. As the authors explain, "Vision isn’t a camera, passively recording the world." Our brains adapt to the task at hand: packing a box prioritises size, while organising kitchen storage groups items by function. Context and goals are what set human perception apart.

AI systems, trained just to match labels, often take superficial shortcuts. When learning to identify a "cat" or "elephant," they overlook context and connections, such as habitats or relationships between concepts. This "representational misalignment", a mismatch in how information is organized, differs from value alignment, which focuses on ensuring AI pursues human-intended goals.

Solutions might be in the pipeline

Training AI on human similarity judgments, for instance deciding whether a mug more closely resembles a glass or a bowl, helps it begin to grasp relational structures, much as our own adaptable thinking does.

As The Conversation article puts it, "Including this data during training encourages AI systems to learn how objects relate to one another."
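To make the mug, glass, and bowl comparison concrete, here is a toy sketch of how a human similarity judgment could be turned into a training signal. The article does not describe the researchers' actual procedure, so everything here is an assumption for illustration: the three-number embeddings are invented, and cosine similarity with a hinge-style triplet penalty is just one common way to score such judgments.

```python
import math

# Hypothetical 3-dimensional embeddings, invented for illustration.
# A real vision model would produce much longer vectors.
embeddings = {
    "mug":   [0.9, 0.8, 0.1],
    "glass": [0.8, 0.9, 0.2],
    "bowl":  [0.2, 0.3, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def model_choice(anchor, option_a, option_b, emb):
    """Return whichever option the model places closer to the anchor."""
    sim_a = cosine_similarity(emb[anchor], emb[option_a])
    sim_b = cosine_similarity(emb[anchor], emb[option_b])
    return option_a if sim_a >= sim_b else option_b

def triplet_loss(anchor, human_pick, other, emb, margin=0.2):
    """Hinge-style penalty (an assumed choice, not the study's stated loss).

    It is zero when the human-picked option is more similar to the anchor
    than the other option by at least `margin`, and grows as the model's
    similarities disagree with the human judgment.
    """
    sim_pick = cosine_similarity(emb[anchor], emb[human_pick])
    sim_other = cosine_similarity(emb[anchor], emb[other])
    return max(0.0, margin - (sim_pick - sim_other))

# Hypothetical human judgment: a mug is more like a glass than a bowl.
human_judgments = [("mug", "glass", "bowl")]

for anchor, pick, other in human_judgments:
    choice = model_choice(anchor, pick, other, embeddings)
    loss = triplet_loss(anchor, pick, other, embeddings)
    print(f"model picks {choice!r}, human picks {pick!r}, loss = {loss:.3f}")
```

With these made-up vectors the model already agrees with the human judgment, so the penalty is zero. If the embeddings instead placed the mug closer to the bowl, the penalty would be positive, and training would nudge the vectors toward the human-preferred arrangement.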

Alignment matters beyond vision

This kind of representational alignment extends far beyond visual tasks and is drawing keen interest from AI researchers. With AI informing critical decisions, gaps between how machines and humans structure information carry serious risks, even for systems that appear precise.
