In the age of AI, we are no longer building or investing in relationships. We are configuring them. Let’s explain how. Not too long ago, we used to stand in front of mirrors for hours before a date, changing from a sexy dress to a semi-casual shirt-skirt option, or going for the age-old white shirt and blue jeans combo after quite a few rehearsals that would make our closets shy away from us.

Most of the time, we would get our “look” right. Some things don’t fail. But for other more difficult questions about human emotions, AI is providing the answers these days. This should not come as a surprise.

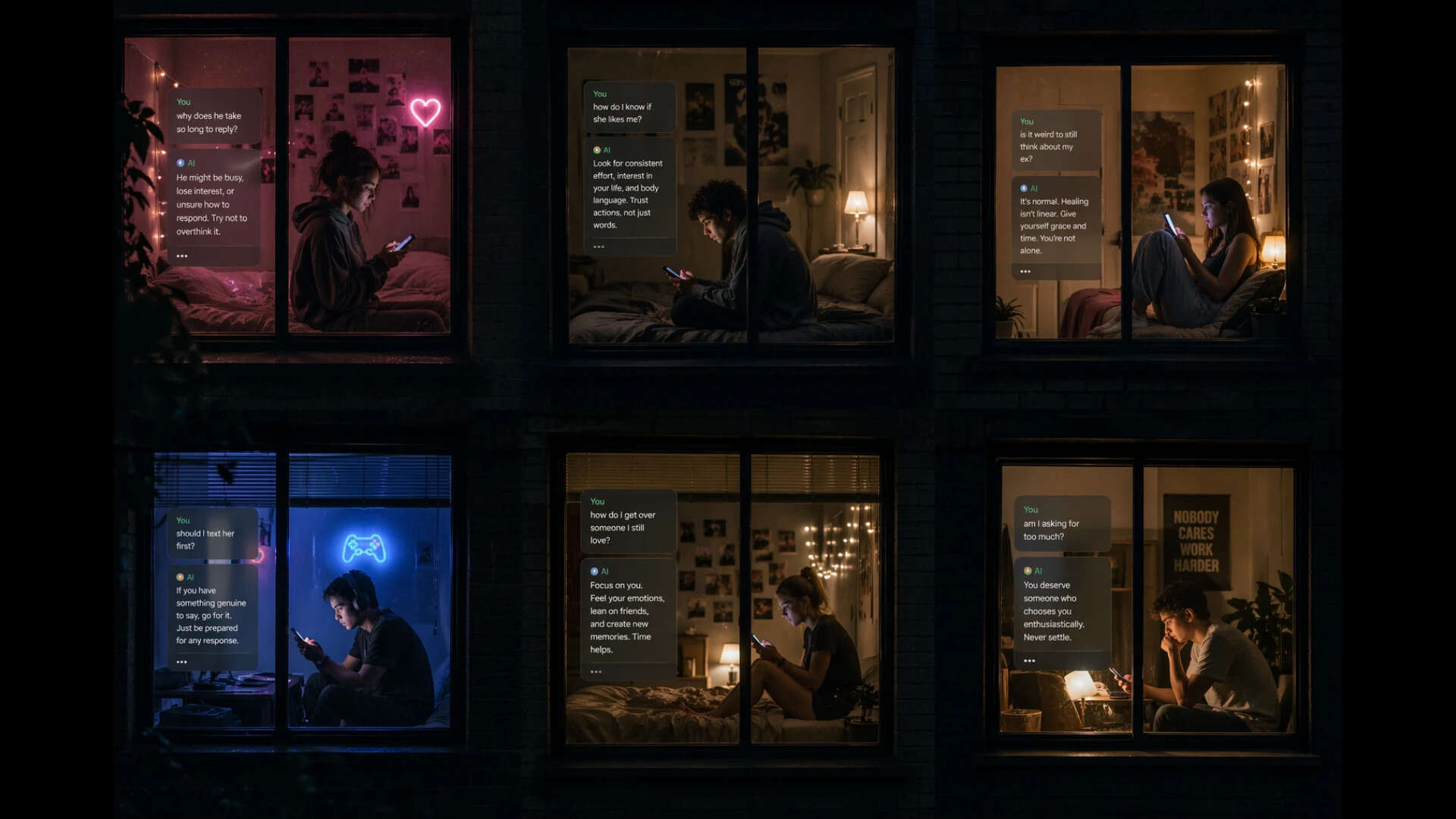

A growing number of Gen Zs are turning to AI chatbots for emotional labour. We are witnessing the early stages of what researchers are beginning to call “social offloading”: the transfer of human interaction itself onto machines. (AI generated)

Not too long ago, we outsourced memory to our phones. Birthdays (smartphones), directions (GPS), even basic arithmetic (calculators, then smartphones) were all handed over without much thought. It felt efficient, even liberating.

Now, something more intimate is slipping out of human hands: conversation, care and connection. For instance, Ishan Das, 35, an accounting executive at a Big 4 firm, recently got married to his college sweetheart. So far, so good. But then came the daily grind of running a house with someone close. “That’s a whole new sphere to navigate, and I just wasn’t prepared,” said Das, explaining why he offloaded to AI questions like “Should I ask to help with kitchen chores every day, after we get home from work?” and “Is it rude to always insist on driving if we are going somewhere together?”

Like Ishan, a growing number of Gen Zs are turning to AI chatbots for emotional labour. They vent, seek advice, rehearse arguments, confess anxieties, and sometimes just talk. Because AI is always there, always patient, and never inconvenient. What we are witnessing is the early stages of what researchers are beginning to call “social offloading”: the transfer of human interaction itself onto machines.

Remember the 2013 movie Her? Just in case you have not watched it, the film is about a lonely writer called Theodore Twombly, played beautifully by Joaquin Phoenix, who buys an artificial intelligence system and ultimately finds it difficult to let go even as his real-life relationships deteriorate.

The quiet rise of social offloading

Traditional tools like calculators, shopping lists, or calendars were more physical proxies. There was no great risk in offloading work to them. Today, technology has extended this idea into the social realm. Emotional thinking itself has been outsourced. It’s not one or two questions. It’s everything that this generation considers an uncomfortable question. All those Millennial and Gen Z dating terms – ghosting, Banksying, ghostlighting, submarining, etc. – are now being discussed with machines. AI (which is always polite) has stepped in where human limitations begin, navigating the complex world of love, dating, friendships, heartache… the whole gamut of emotions we feel, but often can’t deal with.

And therein lies the problem: our inability to face hard questions and deal with the inevitability of life’s difficult answers. Psychologist Dhara Ghuntla says, “AI can offer perspective, sure, but it cannot replace the messy, uncomfortable, deeply human work that real relationships demand.”

For example, when someone uses AI to write a sensitive message, they are handing over the effort of controlling emotions, understanding another person’s perspective, and managing discomfort in difficult conversations to a machine.

The chatbot isn’t simply answering a query; it is responding to a vulnerability. It reassures. It affirms. And it engages in a way that mimics empathy. For users, especially those navigating loneliness or stress, this can feel like relief. Yet it also raises an uncomfortable question: if machines can simulate emotional understanding convincingly enough, what happens to the human need for messy, imperfect, effortful relationships?

Shannon Vallor, American philosopher of technology and author of The AI Mirror: How to Reclaim Our Humanity in an Age of Machine Thinking, uses the idea of an “AI mirror” to explain how these systems affect all of us.

She says that, unlike a human mind, with memory, experience, and real understanding, AI doesn’t truly think. It simply reflects human data back to us. It is similar to talking to that mirror. Only, this time the mirror agrees with all that you say, reinforces your individual thoughts, and throws them back at you. AI, Vallor says, is basically flattery, “feeding our egos and reinforcing a self-centered view of the world. In this sense, AI isn’t producing original thought; it’s echoing human beliefs, desires, and judgments in complex ways”.

At the altar of AI, the first thing we are all sacrificing is our capacity to think critically about complex emotions. Emotions aren’t meant to have easy answers. For centuries, artists have painted, written poetry and prose, and sung songs, all to take us through life’s most difficult emotions with ease. They did it to make sure we all understand that everyone, irrespective of age, has experienced anger, heartbreak and loss, and has grieved over loved ones.

Life's biggest lesson is that life isn't easy.

“How to break up without breaking his/her heart?” Ask AI. Take this up as a task, and you’ll see that, depending on your specific question, AI will have an answer. Short or long. Whatever you want. But do we have an answer, really? If a machine tells us politely how to politely break up with someone, will the person stop feeling bad, and politely walk away? Would his or her heart not break? Will you walk away feeling lighter and happier, with no baggage? Ghuntla adds, “When we start offloading complex emotions – jealousy, insecurity, conflict, heartbreak – to AI, we risk skipping the very processes that build emotional depth. Sitting with confusion, reflecting on our reactions, having difficult conversations – these are not inefficiencies to eliminate, but experiences that shape empathy, resilience, and self-awareness.”

Human relationships are complicated. They require timing, reciprocity and patience. They involve miscommunication, disagreement, and repair. AI removes all of that. With AI, there’s no fear of judgment. No risk of rejection. No emotional cost for being repetitive, needy, or unsure. The chatbot listens endlessly and responds instantly. It adapts to your tone, your preferences, your worldview. This is intimacy without friction.

And that is precisely why it is so powerful for Gen Z. Because once people get used to relationships that demand nothing, real relationships can begin to feel exhausting by comparison. What’s most concerning is that the generation most in need of human connection may be the one most likely to receive a synthetic substitute. Instead of expanding social ecosystems, AI risks becoming a parallel track that’s narrow in vision.

Tech biggies are increasingly presenting AI as a kind of all-knowing voice, which can make it seem like it understands us better than we understand ourselves. This illusion is similar to the story of Narcissus, who became so obsessed with his reflection that he lost himself. Today, people risk doing something similar: mistaking the AI’s reflection for a real, independent mind.

Over time, this can make it harder to form genuine relationships, especially in a world that increasingly values speed and efficiency over meaningful human connection.

The loneliness paradox

The rise of AI companionship is also deeply tied to loneliness. If people begin substituting human interaction with AI, social skills can atrophy. The effort required to maintain real relationships may start to feel disproportionate. Gradually, a feedback loop forms: the more one relies on AI, the harder human connection becomes—and the more appealing AI feels.

It is not hard to imagine a future where loneliness is not just alleviated by technology, but quietly reinforced by it.

It’s possibly one of the reasons why Slovenian sociologist, philosopher, and cultural critic Slavoj Žižek calls human engagement with artificial intelligence a “fetishistic denial”. It goes like this. The user knows they are not talking to a real person, yet they act as if they are to avoid the risks of real conversation.

This results in the erosion of the constitutive lie that characterizes social relations.

In human interaction, polite lies and the failure to express love perfectly are what add sincerity to our personalities. They make us human. But if an expression of love is perfectly declared by an algorithm, it becomes a flat, mechanical expression rather than a confirmation of truth. It’s a comforting illusion. And illusions are hard to break.

That is possibly the reason why today’s generation is latching on to AI so hard.

But the problem with that, Žižek argues, is that people often undermine their own happiness by taking shortcuts. In the end, we damage the very happiness we’re chasing.

Then what does this dependence on AI really say about where we are at this point? It is tempting to frame this as a story entirely about artificial intelligence. It is not really. It is a story about human needs in our times. Our minds are overwhelmed. We are all anxious and irritable, and seem to slip into a brain fog at least 100 times a day. In such a scenario, people are seeking connection in places that require too little of them.

Conversation has started to feel burdensome. The idea of being fully heard has become too compelling.

We can’t blame just AI for creating this gap. But it did reveal it. Algorithms did the rest. Then Big Tech offered a solution. American science fiction writer Ted Chiang takes this thought further. He worries that AI, while helping us navigate complex emotional problems, is also changing how we think about ourselves. He says that just as a brush or a car becomes an extension of the user, AI can shape our minds more deeply in the future.

Not far from now, instead of interacting with these systems, we will adapt to them.

AI will tell us about our feelings rather than the other way round – which is where we are right now. This is where it genuinely gets scary.

We don’t build relationships anymore, we consume them

We didn’t get here in a day. When we constantly consume short, fragmented content (Insta Reels, TikTok, YouTube Shorts), the brain adapts to it. Real life and real emotions are far more complex than machine answers make them out to be. The result of prolonged dependence on AI would be that, instead of reflecting deeply, the brain starts working more like a quick signal processor.

American journalist and writer Nicholas Carr, who writes books on the human consequences of technology, has a lot to say about this.

“Technology is rewiring human brains, shifting from deep contemplation to rapid, superficial information gathering”, he says in his book The Shallows. Carr adds that while previous technologies helped with memory, generative AI is the first technology that replaces human thinking itself. And despite knowing the negative effects on our attention and minds, he says, we are all at the moment driven by internet models to constantly use and rely on these technologies.

Chiang ties this all up succinctly when he says the real problem, machines apart, is us: the humans who design them. We’ve built systems we often dislike and still depend on, almost like we’re trapped in a system of our own making. There’s a growing belief that technology can solve all human problems, and that efficiency matters more than human empathy or any feeling. This mindset can dull our awareness, putting us in a position similar to that of Narcissus, passively absorbed in a reflection, without fully realizing what’s happening to all of us.
