Bengaluru: At Sarvam, the effort to build a 105-billion-parameter foundational large language model (LLM) was led by a team of just 15 researchers and engineers—many of them in their twenties and early thirties.

The effort was spearheaded by Rahul Aralikatte, a postdoctoral researcher at Mila - Quebec AI Institute, which is headed by Turing Award winner Yoshua Bengio. Aralikatte led the team’s work across data engineering, large-scale pre-training, evaluation frameworks and safety guardrails for the model. He holds six US patents and has authored more than 30 peer-reviewed papers in journals and conferences, with over 1,000 citations.

Among the contributors was Sumanth Doddapaneni, a machine learning researcher at Sarvam and a fourth-year PhD student (currently on leave) in the computer science department at IIT Madras. Doddapaneni managed the model’s pre-training runs and contributed to data engineering, while Mohit Singla and Aashay Sachdeva worked across data engineering and post-training. Anna Upreti focused on safety and alignment during the model’s post-training phase.

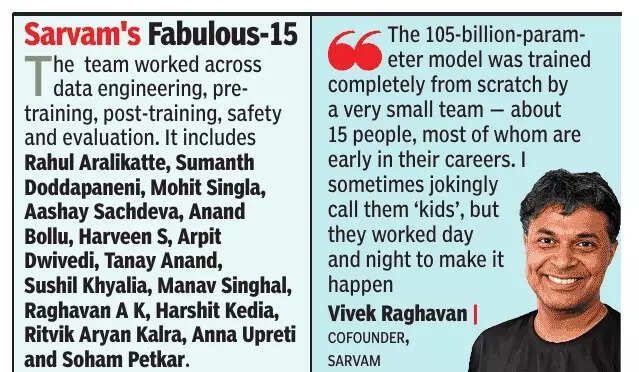

“The 105-billion-parameter model was trained completely from scratch by a very small team, about 15 people, most of whom are early in their careers. I sometimes jokingly call them ‘kids’, but they worked day and night to make it happen,” said Sarvam cofounder Vivek Raghavan. “What matters is identifying the people who can become 10x or even 100x engineers.”

Sarvam has a team of 40 technologists. It describes itself as a full-stack GenAI company whose work spans the entire stack, from core models to end-user applications.

At the foundation, it trains models from scratch and enhances them through fine-tuning and reinforcement learning. On top of this sit harnesses and orchestration layers that allow the models to perform useful tasks. Sarvam has also developed several product families, including Sarvam for Conversations, which enables AI-driven communication across India’s languages; Sarvam for Work, focused on enterprise workflows and internal process automation; and Sarvam Studio, designed to help create content in Indian languages and culturally relevant formats.

In addition, the company provides APIs for developers, with hundreds of companies and developers already using them to build their own AI-powered products.

Raghavan added that two factors tend to attract talent to Sarvam. “Many of them feel strongly about building technology for India’s needs. And when you work at a company like Sarvam, you can contribute across a much larger scope of work. At a large multinational company, you might only work on a small piece of a much bigger system,” he said.

India’s twenty-somethings are fast emerging as AI natives, mirroring trends seen in the West, where young founders and developers are building cutting-edge AI start-ups such as Mercor and Cursor—many of them led by Indian-American entrepreneurs.

Commenting on India’s AI talent, Manish Gupta, senior director at Google DeepMind, said teams in India are driving research to make AI models more efficient while better reflecting the country’s linguistic and cultural diversity.

“We are inspired by how Indian innovators used these capabilities to build indigenous solutions. It is exciting to see local innovation thriving in India as we continue to advance the frontiers of general reasoning with Gemini and our other foundational models,” he said.

The team worked across data engineering, pre-training, post-training, safety and evaluation. It includes Rahul Aralikatte, Sumanth Doddapaneni, Mohit Singla, Aashay Sachdeva, Anand Bollu, Harveen S, Arpit Dwivedi, Tanay Anand, Sushil Khyalia, Manav Singhal, Raghavan A K, Harshit Kedia, Ritvik Aryan Kalra, Anna Upreti and Soham Petkar.
