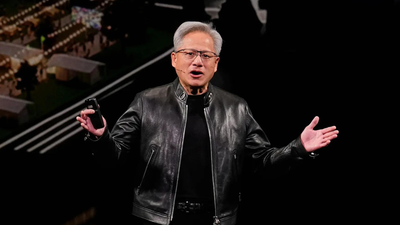

How Nvidia's Vera Rubin platform deploys asset specificity, software lock-in, and two decades of developer infrastructure to build a structural competitive advantage that chip benchmarks alone cannot capture.

At GTC 2026, Jensen Huang raised Nvidia's revenue projection to $1 trillion in purchase orders through 2027, doubling the figure from twelve months earlier. The revised number reflects a thesis Nvidia has been executing for nearly two decades: that owning every layer of the compute stack produces a compounding structural advantage that competitors cannot close by building a faster chip.

Seven chips, one system

The Vera Rubin platform, announced March 16, comprises seven purpose-built chips across five rack-scale systems, scaling to 40-rack pods that deliver 60 exaflops of compute. Each of the seven chips serves a defined, non-interchangeable function: the Rubin GPU handles large-scale training and inference; the Vera CPU handles workload scheduling and agentic control; the Groq 3 LPU handles low-latency decode-phase inference; and NVLink 6 switches, ConnectX-9 NICs, BlueField-4 DPUs, and Spectrum-6 Ethernet complete the interconnect fabric.

Substituting any single element degrades co-design benefits across the whole stack. Migration to a competing platform requires re-engineering workloads, retraining operations teams, and surrendering performance gains that exist specifically because every component was designed to interact with every other. Oliver Williamson's transaction cost economics holds that vertical integration becomes rational when assets are highly specific, meaning their value is significantly higher inside a particular relationship than in any alternative use (Williamson, 1985). Nvidia has engineered precisely this condition into its customers' infrastructure decisions. Huang stated it plainly at GTC: "When we think Vera Rubin, we think the entire system, vertically integrated completely with software, extended end to end, optimized as one giant system."

Value chain coordination and the software layer

Porter's value chain framework (1985) holds that competitive advantage accrues through the coordination efficiencies between activities rather than from any single activity in isolation. The competitive significance of this architecture compounds through software. Dynamo 1.0, released at GTC as the inference orchestration layer, routes prefill tasks to Vera Rubin GPUs and decode work to Groq LPUs in parallel, producing a 35x improvement in tokens-per-watt at premium inference tiers. Underlying this is a twenty-year developer base: CUDA now runs on more than 500 million installed GPUs, and the developer community grew 150% between 2020 and 2025. That base helps explain Nvidia's 86% share of data center GPU revenue in 2026. A vendor selling GPUs into a heterogeneous stack cannot replicate performance gains that depend on co-designed chips, interconnect, and orchestration software operating as a unified system, nor can it quickly close an ecosystem gap measured in decades of accumulated developer tooling, libraries, and institutional familiarity.

The investor implication: Platform economics over chip cycles

Vera Rubin NVL72 delivers 10x higher inference throughput per watt at one-tenth the cost per token versus Grace Blackwell. With Groq, throughput at the $45 to $150 per million token premium tier improves by 35x. With data center power capacity largely fixed, tokens-per-watt determines infrastructure revenue potential, and Nvidia's platform leads on that metric.

The pattern here is familiar. Durable returns in platform markets accrue to firms that make their infrastructure the default operating environment for the ecosystem around them.
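The fixed-power argument above reduces to simple arithmetic, sketched below in Python. Everything in the sketch is an illustrative assumption rather than a figure from Nvidia: the function name `annual_token_revenue`, the reading of "tokens-per-watt" as tokens per second per watt, the 100 MW site, the baseline efficiency, and the choice to hold the price per token constant while efficiency rises.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_token_revenue(power_watts, tokens_per_sec_per_watt,
                         usd_per_million_tokens, utilization=1.0):
    """Revenue ceiling for a site whose power budget is fixed.

    Hypothetical model: tokens served scale linearly with power,
    efficiency, and the fraction of the year the site is busy.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    tokens_per_year = (power_watts * tokens_per_sec_per_watt
                       * SECONDS_PER_YEAR * utilization)
    return tokens_per_year / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    site_power_watts = 100e6    # assumed 100 MW data center
    baseline_efficiency = 0.5   # assumed tokens/sec/watt, not a vendor figure
    price = 45.0                # low end of the premium tier cited above

    before = annual_token_revenue(site_power_watts, baseline_efficiency, price)
    after = annual_token_revenue(site_power_watts, 10 * baseline_efficiency, price)
    print(f"revenue multiple at constant price and power: {after / before:.1f}x")
```

With power held constant, revenue potential scales one-for-one with efficiency only if the price per token holds. If prices fall in line with the cost per token, the gain shows up as volume or margin rather than as a revenue multiple.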

The enterprises committing multiyear budgets to Vera Rubin are ratifying exactly that position. From an investor valuation standpoint, the distinction between a chip vendor and a platform matters: terminal value models built on chip-cycle assumptions systematically underweight the compounding value of the ecosystem, and the shift to vertically integrated systems calls for moving from traditional hardware replacement models to perpetual infrastructure annuity models in DCF valuations.
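The gap between the two valuation approaches can be illustrated with a minimal Python sketch. It compares the present value of cash flows over a single hardware replacement cycle with the value of the same cash flow treated as a growing perpetuity (the standard Gordon growth formula). The function names, the $100 cash flow, the 10% discount rate, the 2% growth rate, and the five-year cycle are all hypothetical inputs chosen for illustration, not estimates for Nvidia.

```python
def pv_finite_cycle(cash_flow, discount_rate, years):
    """Present value of a level cash flow received at the end of each
    year for a fixed number of years (one hardware replacement cycle)."""
    return sum(cash_flow / (1 + discount_rate) ** t
               for t in range(1, years + 1))


def pv_growing_perpetuity(cash_flow, discount_rate, growth_rate):
    """Present value of a cash flow that starts one year out and grows
    at a constant rate forever (Gordon growth formula)."""
    if discount_rate <= growth_rate:
        raise ValueError("discount_rate must exceed growth_rate")
    return cash_flow / (discount_rate - growth_rate)


if __name__ == "__main__":
    cash_flow = 100.0      # hypothetical annual cash flow
    discount_rate = 0.10   # hypothetical cost of capital
    growth_rate = 0.02     # hypothetical long-run growth
    cycle_years = 5        # hypothetical replacement cycle

    cycle_value = pv_finite_cycle(cash_flow, discount_rate, cycle_years)
    annuity_value = pv_growing_perpetuity(cash_flow, discount_rate, growth_rate)
    print(f"single replacement cycle: {cycle_value:.1f}")
    print(f"perpetual annuity:        {annuity_value:.1f}")
```

The comparison is deliberately stark. A real chip-cycle model would include some probability of winning the next cycle, and the perpetuity is highly sensitive to the spread between the discount rate and the growth rate, so the sketch shows the direction of the difference rather than its size.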

The relevant comparable is Visa. Visa's position rests on the network through which transactions flow, the developer infrastructure built on top of it, and the cost to any participant of rebuilding outside it. Nvidia's CUDA ecosystem, software stack, and vertically integrated hardware operate by the same logic. The metrics that matter are developer base growth, inference workload attachment to CUDA, and the rate at which enterprises are committing fixed infrastructure spend to the platform.

At twenty years deep, the ecosystem has become the asset.
